Here’s something nobody tells you about bad decisions:

Most of them weren’t made badly. They were set up badly — long before anyone sat down to choose.

The meeting happens. The options get laid out. Someone makes a pros-and-cons list. Someone else asks, “What does the data say?” And then, usually, the room goes with whatever felt right to the most senior person before the meeting started.

The decision was already made. The process was theater.

This isn’t cynicism. It’s systems thinking.

The Real Reason Decisions Fail

When a decision goes wrong, we blame the choice. We should be blaming the map.

Every decision exists inside a system — a web of stakeholders, incentives, feedback loops, and constraints. Most people never look at the system. They look at the surface: Option A versus Option B, the spreadsheet, the risk register.

But the system is where the real answer lives.

Consider a $2M IT infrastructure decision I watched unfold in a conference room full of smart, capable people. They had data. They had options. They had a process.

What they didn’t have was a map of what the vendor actually needed from the deal.

The vendor was under margin pressure from a competitor. They needed a reference customer in a new vertical. They would have signed at 30% below the price on the table — and thrown in three years of priority support to close it.

Nobody asked. Nobody modeled the incentive.

They listed pros. They listed cons. They chose. They paid full price for a vendor who needed them more than they needed the vendor.

The decision wasn’t wrong. The map was missing.

What a Decision Map Actually Shows You

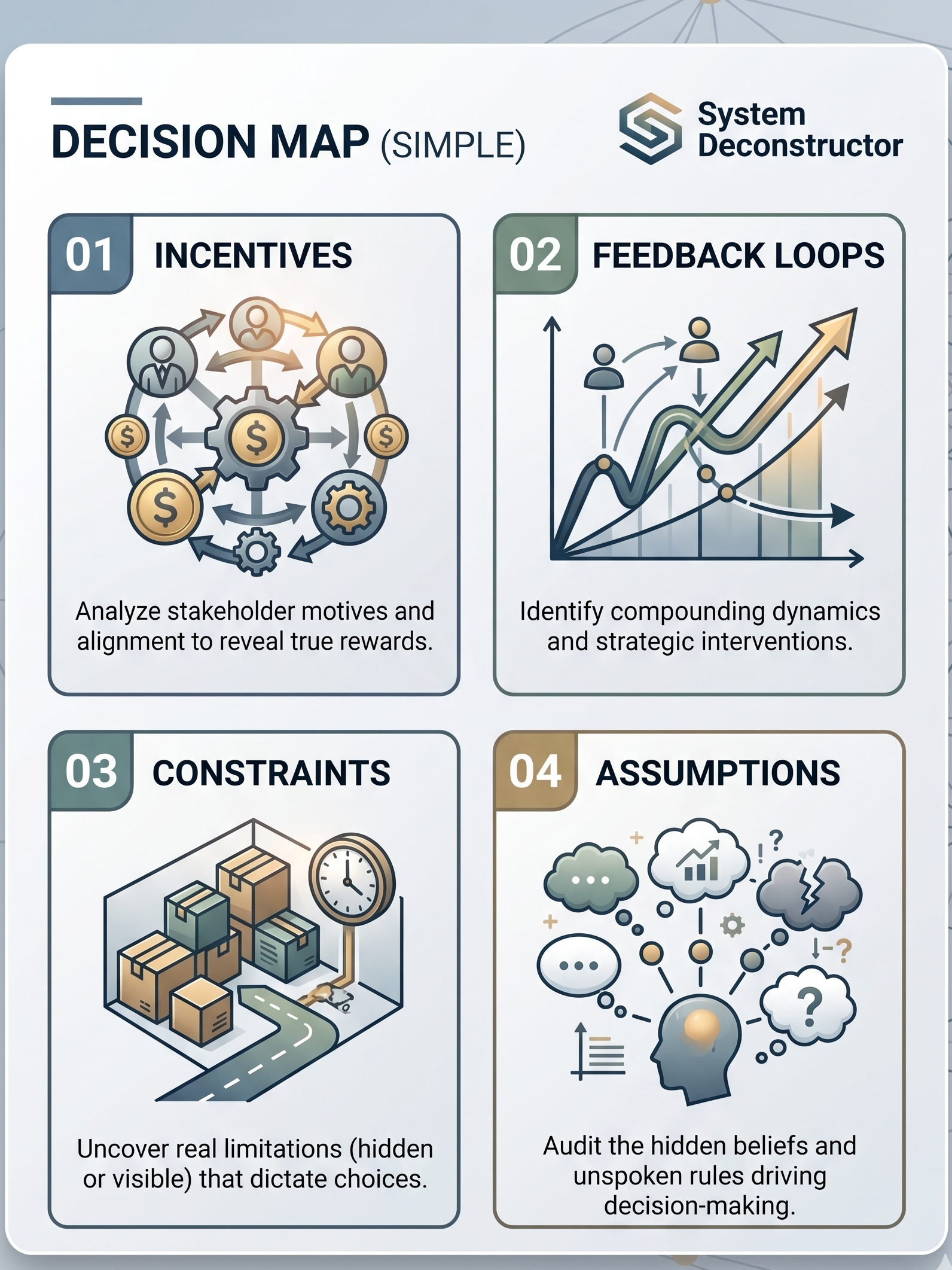

A proper decision map has four components. Most analysis covers none of them.

1. Stakeholder Incentives (Real Ones)

Not what people say they want. What they actually need.

The vendor needs margin. The internal champion needs a win before year-end review. The CFO needs to look fiscally responsible. The end users need something that doesn’t break on Friday afternoon.

These are four different problems wearing the same costume. If you optimize for one, you alienate the others. If you map all four, you find the move that satisfies enough of them to get to yes.

2. Feedback Loops

What compounds over time — positively and negatively?

The “safe” choice often looks safe in month one. By month eighteen, the technical debt from that safe choice has compounded into a rebuild. The “risky” choice, which demanded organizational change in month one, would by then have compounded into a competitive advantage.

Pros-and-cons lists are static. Systems move. The decision you make today creates the conditions for every decision you’ll make for the next three years.

3. Hidden Assumptions

Every decision rests on a set of beliefs the decision-maker has never examined.

“We need to move fast.” Do you? Or does it feel urgent because someone upstream is anxious?

“The market won’t pay more than X.” Based on what? A price test you ran two years ago in a different economic environment?

“Our team can’t handle the transition.” Have you asked them? Or are you projecting last year’s failure onto this year’s team?

Hidden assumptions are load-bearing walls in the architecture of your decision. Pull the wrong one out and the whole structure collapses — but you won’t know which one it is until someone maps them.

4. The Real Constraint

This is the most important and most consistently ignored element.

Every decision has one constraint that makes everything else irrelevant until it’s resolved. Not the most visible constraint. The one underneath it.

In the infrastructure example, the real constraint wasn’t budget, timeline, or technical requirements. It was the procurement team’s relationship with the incumbent vendor, a relationship that made any competing bid feel like a betrayal rather than a business decision.

Fix the relationship dynamic first. Everything else becomes negotiable.

The Leverage Point

When you map the system — stakeholders, loops, assumptions, constraints — something becomes visible that wasn’t before:

The leverage point. The single intervention that changes the outcome without requiring you to fight the entire system.

In physics, a lever lets you move a heavy object with minimal force, but only if the fulcrum sits at the right point. The same principle applies to decisions.

Most people try to push the whole system. The leverage point lets you move the one thing that moves everything else.

It’s almost always smaller than you expect. It’s almost never the most obvious thing in the room.

How to Find It

Ask these five questions before any significant decision:

- Who actually benefits from each possible outcome — and are their incentives aligned with yours?

- What are the feedback loops? What compounds over time if you choose A versus B?

- What are you assuming that you haven’t examined?

- What is the one constraint that makes everything else secondary?

- Where is the smallest intervention that creates the largest shift?

You don’t need a framework to ask these questions. But a framework makes you ask them every time — not just when the stakes are high enough to slow down.

What We Built

We’ve spent months turning this process into a tool.

Lever is a decision intelligence platform that runs any decision through the System Deconstructor framework — mapping stakeholders, incentives, feedback loops, hidden assumptions, and constraints — and surfaces one leverage point with a recommended first move.

It works on anything. Career decisions. Investment calls. Vendor selection. Organizational restructuring. Whether to start the company. Whether to end the partnership.

The system is always there. Lever makes it visible.

Early access is open now at theai-4u.com/lever.

The free tier gives you three deconstructions. No credit card. No commitment.

If you’re facing a decision right now that you can’t quite see clearly — start there.

Wave is a Senior Technical Orchestrator and AI systems architect. This post is part of an ongoing series on decision intelligence, AI agent deployment, and building profitable systems that genuinely help people. More at theai-4u.com.